GPT‑5.3 Codex Spark vs GPT‑5.3 Codex: Comprehensive analysis

In February 2026, OpenAI released two closely related—but strategically different—members of the “Codex” family: GPT-5.3-Codex (a high-capability agentic coding model) and GPT-5.3-Codex-Spark (a smaller, ultra-low-latency variant optimized for interactive coding). Together they represent OpenAI’s dual approach to serving both “deep thinking” and “fast doing” in software engineering workflows: one model that pushes the ceiling of coding intelligence and tool-driven agentic behavior, and one that prioritizes real-time interactivity for developer-facing UI.

CometAPI now integrates with GPT-5.3 Codex, which you can use via API. CometAPI's discounts and service philosophy will surprise you.

What are GPT-5.3-Codex and GPT-5.3-Codex-Spark?

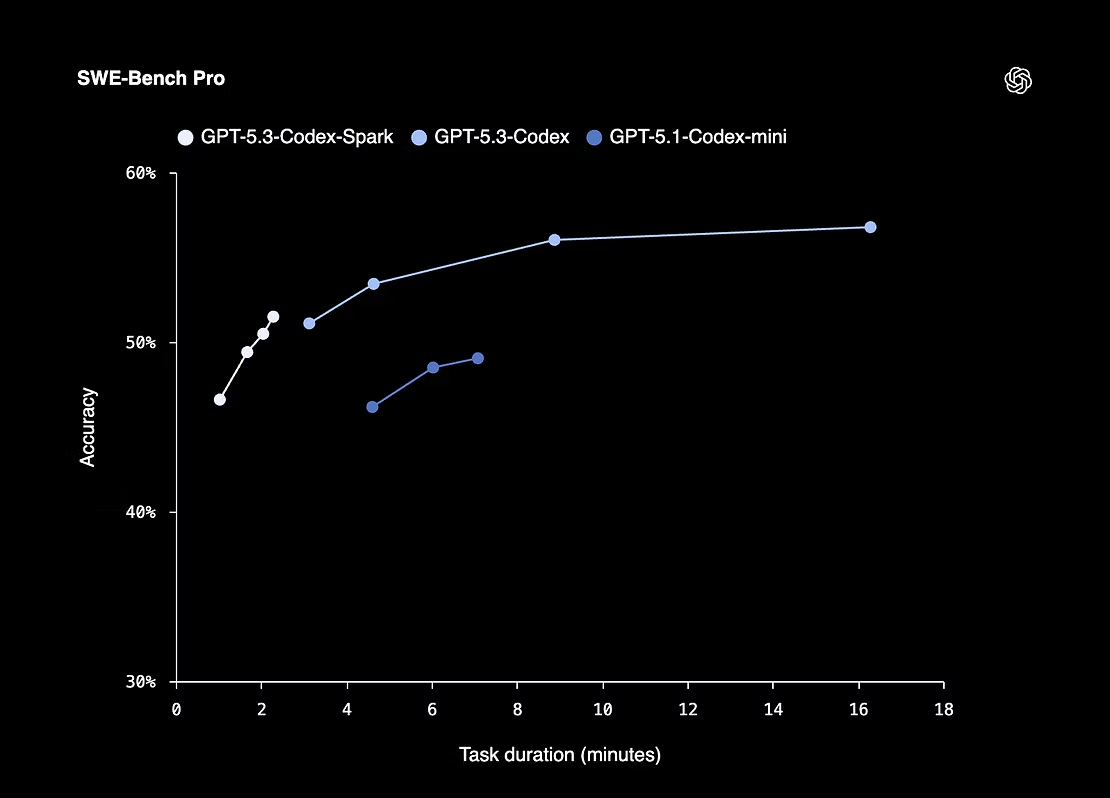

GPT-5.3-Codex is the latest "frontier" coding agent from OpenAI. It combines advanced coding abilities with general reasoning and is explicitly designed for long-horizon, agentic tasks that involve researching, using tools, running terminal commands, iterating across many tokens, and managing multi-step software projects. OpenAI reports state-of-the-art results on multi-language engineering benchmarks such as SWE-Bench Pro and Terminal-Bench 2.0 and highlights that GPT-5.3-Codex can be used to debug, deploy, and even assist in its own development workflows.

GPT-5.3-Codex-Spark is a smaller, latency-optimized variant intended for interactive, real-time coding experiences. Spark was co-developed to run on wafer-scale hardware from Cerebras, enabling throughput exceeding 1,000 tokens per second and a 128k token context window for the initial release. It is positioned as a companion model: extremely fast for inline edits, boilerplate generation, quick refactors, and short-hop tasks — but intentionally lighter in reasoning depth than the standard Codex.

Why have two models? The split reflects a practical product trade-off: teams want both (a) a deep, capable agent that can plan and reason across a huge problem space, and (b) a near-instant collaborator that keeps a developer in flow. The evidence suggests these should be used together in a hybrid workflow, not as direct replacements for one another.

GPT‑5.3 Codex Spark vs Codex: architectures and deployments

What hardware supports each model?

- GPT-5.3-Codex (standard): co-designed, trained, and served primarily on NVIDIA GB200 NVL72 GPUs and the associated inference stack that supports deep reasoning and very large parameter counts. This infrastructure favors model capacity over sub-millisecond latency.

- GPT-5.3-Codex-Spark: runs on Cerebras Wafer-Scale Engine (WSE-3) hardware. Cerebras’ architecture provides extreme on-chip bandwidth and low latency at the cost of a tighter capacity profile: the Spark variant is a smaller, pruned model sized to fit the wafer’s SRAM constraints while delivering much higher token throughput.

How does model size and parameterization differ?

Spark achieves its speed through pruning/distillation and a smaller parameter footprint so the model can fit and run efficiently on WSE-3. That design choice creates the expected performance trade-off: much higher throughput at lower per-token reasoning depth.

What about context windows and token handling?

- GPT-5.3-Codex: the developer documentation entry for the model lists a 400,000-token context window. This makes the standard model well suited to long-running projects where it must reason across thousands of lines and many files.

- GPT-5.3-Codex-Spark: the research preview launches with a 128k-token context window, which is large but less than a third of the standard Codex window. That is still huge relative to everyday IDE snippets, but the smaller window combined with a smaller model implies limitations in deep, multi-file code synthesis.
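To make the context-window difference concrete, here is a minimal sketch that checks which model's window can hold a set of files. The window sizes are the ones quoted above (128k taken as 128,000). The four-characters-per-token ratio, the output reserve, and the function names are assumptions made for illustration, so swap in a real tokenizer when accuracy matters.

```python
# Context-window sizes quoted in this article, in tokens.
CONTEXT_WINDOWS = {
    "gpt-5.3-codex": 400_000,
    "gpt-5.3-codex-spark": 128_000,
}

# Assumption: roughly 4 characters per token, a coarse rule of thumb.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Rough token estimate from character count, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def models_that_fit(files: dict[str, str], reserve_for_output: int = 8_000) -> list[str]:
    """Return the models whose window holds all files plus an output reserve."""
    needed = sum(estimate_tokens(body) for body in files.values()) + reserve_for_output
    return [name for name, window in CONTEXT_WINDOWS.items() if needed <= window]


if __name__ == "__main__":
    # Hypothetical repository: about 160,000 estimated tokens of source.
    repo = {"app.py": "x" * 600_000, "utils.py": "y" * 40_000}
    print(models_that_fit(repo))  # ['gpt-5.3-codex']
```

A check like this can run before every request, so that oversized prompts never reach the smaller window in the first place.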

GPT‑5.3 Codex Spark vs Codex: coding benchmarks and latency

Below are the most load-bearing public datapoints:

- GPT-5.3-Codex (standard): OpenAI published benchmark numbers in their release: Terminal-Bench 2.0 score 77.3%, SWE-Bench Pro 56.8%, OSWorld 64.7%, GDPval wins/ties 70.9% and other task scores highlighted in their appendix. These numbers position GPT-5.3-Codex as a new leader in multi-language, agentic software engineering tasks.

- GPT-5.3-Codex-Spark: OpenAI emphasizes throughput above 1,000 tokens/sec and strong task completion speed, while independent analyses and early-adopter community benchmarks report significantly lower terminal reasoning accuracy on complex tasks compared to the full model. One independent analysis estimates a Terminal-Bench score of ~58.4% for Spark (versus 77.3% for standard), showing the practical trade-off between speed and correctness on complex terminal tasks.

Interpretation: for short, well-scoped tasks — e.g., small edits, unit test generation, regex or syntax fixes — Spark’s latency makes the human-AI loop smoother and increases developer throughput. For architecting systems, debugging complex integration errors, or agentic multi-step workflows, the standard GPT-5.3-Codex’s higher reasoning accuracy is materially superior.
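The trade-off can be put into rough numbers using only the figures quoted above. This sketch assumes generation time is simply output tokens divided by throughput, which ignores network round trips and time to first token. The function names are illustrative.

```python
# Figures quoted in this article.
SPARK_TOKENS_PER_SEC = 1_000  # lower bound reported for Spark
TERMINAL_BENCH = {"gpt-5.3-codex": 77.3, "gpt-5.3-codex-spark": 58.4}


def generation_seconds(output_tokens: int, tokens_per_sec: float = SPARK_TOKENS_PER_SEC) -> float:
    """Idealized generation time: tokens divided by throughput."""
    return output_tokens / tokens_per_sec


def accuracy_gap_points() -> float:
    """Terminal-Bench gap between standard Codex and Spark, in percentage points."""
    gap = TERMINAL_BENCH["gpt-5.3-codex"] - TERMINAL_BENCH["gpt-5.3-codex-spark"]
    return round(gap, 1)


if __name__ == "__main__":
    print(generation_seconds(256))   # 0.256 seconds for a short inline edit
    print(generation_seconds(2000))  # 2.0 seconds for a long patch
    print(accuracy_gap_points())     # 18.9 percentage points
```

On these numbers, a short edit arrives in about a quarter of a second, while the reported accuracy gap on complex terminal tasks is close to 19 points.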

Why does GPT‑5.3 Codex Spark feel so much faster?

Is this purely a hardware trick?

Partly. The Cerebras WSE-3 used for Spark eliminates much of the memory-movement latency by keeping large buffers of data on-chip and providing enormous memory bandwidth. But hardware alone would not be enough — OpenAI created a distilled/pruned variant that maps to the wafer’s SRAM and compute profile. That combination (smaller model + wafer-scale low latency) produces the real-time behavior.

What’s the cost of pruning/distillation?

Distillation reduces parameter count or model depth and can remove some capacity for multi-step reasoning. Practically this manifests as:

- weaker performance on complex terminal tasks requiring chained deductions;

- higher probability of subtle logic or security errors for long or deeply linked code changes;

- fewer internal “what I’m thinking” tokens (i.e., less chain-of-thought reasoning when not explicitly requested).

That said, Spark excels at targeted edits and high-bandwidth recall — the type of assistance that keeps a developer typing without interruption.

What does this mean for product teams and developers?

When should you call Spark vs standard Codex?

- Call Spark when you need: instant inline completions, interactive refactoring, quick CI checks, unit-test scaffolding, syntax repair, or real-time code suggestions that must not break a user’s flow. Spark’s sub-second generation makes the UI feel seamless.

- Call standard GPT-5.3-Codex when you need: architecture design, complex bug triage, multi-file reasoning, long-running agents, security/hardening checks, or operations where first-pass correctness reduces expensive verification.

Suggested hybrid workflows

- Use Spark as a “tactical” sub-agent for short edits and to maintain developer flow (map to a keyboard shortcut or inline button in an IDE).

- Use GPT-5.3-Codex as the “strategic” planner: for PR generation, refactoring plans that require deep context, or thorough security checks.

- Implement “hybrid mode”: automatically route short, syntax/style prompts to Spark and escalate discussions or multi-step requests to standard Codex. OpenAI is exploring hybrid routing, but you can implement it client-side now.
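Client-side routing of the kind described above could look roughly like the sketch below. The keyword list, the prompt-length threshold, and the `choose_model` function are hypothetical heuristics chosen for illustration, not a documented routing policy. Tune them against your own traffic.

```python
import re

SPARK = "gpt-5.3-codex-spark"  # illustrative model IDs
CODEX = "gpt-5.3-codex"

# Hypothetical signals that a request needs deeper reasoning.
ESCALATION_HINTS = re.compile(
    r"\b(architect\w*|design|debug|security|migrat\w*|multi-file|across files|plan)\b",
    re.IGNORECASE,
)
MAX_SPARK_PROMPT_CHARS = 2_000  # assumption: longer prompts tend to be multi-step


def choose_model(prompt: str, files_touched: int = 1) -> str:
    """Route short, single-file prompts to Spark and escalate everything else."""
    if files_touched > 1:
        return CODEX
    if len(prompt) > MAX_SPARK_PROMPT_CHARS:
        return CODEX
    if ESCALATION_HINTS.search(prompt):
        return CODEX
    return SPARK


if __name__ == "__main__":
    print(choose_model("Fix the missing colon on line 12"))
    print(choose_model("Debug the failing integration between auth and billing"))
```

Keeping the router as a pure function makes it easy to unit test and to replace later if server-side routing becomes available.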

Prompting & operational best practices

- Start with small, targeted prompts in Spark and escalate to Codex for full refactors or where correctness is critical. That hybrid pattern gives the best UX (Spark for drafts, Codex for verification & finalization).

- Use streaming for UI interactions: show incremental tokens from Spark to create a “live” feel; avoid long synchronous calls that block the editor.

- Instrument verification tests: for any change that touches logic or security, require unit tests and prefer Codex to run or synthesize those tests. Automate a test-and-verify cycle where Spark proposes a change and Codex validates/finalizes it.

- Tune reasoning effort: many Codex endpoints provide a reasoning or effort knob (e.g., low/medium/high/xhigh); increase effort for tricky, high-impact tasks.

- Cache & session management: for Spark-powered UIs, cache previous context tokens efficiently and send only the delta to minimize per-request latency and token usage.

- Safety first: follow the vendor’s system card and governance guidance for high-risk domains (cyber, bio, etc.). Codex’s system card explicitly documents additional safeguards and preparedness steps when models reach high capability in certain domains.
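The test-and-verify cycle from the list above can be sketched as a small control loop. The three callables are placeholders you would back with real model calls and a test runner. Their names, and the idea of allowing two fast drafts before escalating, are assumptions made for illustration.

```python
from typing import Callable

Propose = Callable[[str], str]       # task -> candidate patch (e.g. from Spark)
Verify = Callable[[str, str], bool]  # (task, patch) -> did verification pass?
Finalize = Callable[[str], str]      # task -> patch from the stronger model


def propose_and_verify(
    task: str,
    propose: Propose,
    verify: Verify,
    finalize: Finalize,
    max_drafts: int = 2,
) -> tuple[str, str]:
    """Try fast drafts first; fall back to the stronger model if none verifies."""
    for _ in range(max_drafts):
        patch = propose(task)
        if verify(task, patch):
            return "draft", patch
    return "escalated", finalize(task)


if __name__ == "__main__":
    # Stub callables stand in for model calls and a test run.
    result = propose_and_verify(
        "add a null check",
        propose=lambda task: "patch-from-fast-model",
        verify=lambda task, patch: False,  # pretend the tests fail
        finalize=lambda task: "patch-from-strong-model",
    )
    print(result)  # ('escalated', 'patch-from-strong-model')
```

Passing the model calls in as functions keeps the loop testable without network access and lets you change which model plays which role.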

There are two common patterns: (A) an interactive streaming call to Codex-Spark for inline completions, and (B) a more agentic, higher-effort request to GPT-5.3-Codex for a long-running refactor or agent task.

A) Example — streaming inline completions with Codex-Spark (Python)

```python
# Illustrative sketch: stream a short completion for an inline edit.
# Install: pip install openai
# Assumptions: the model ID "gpt-5.3-codex-spark" is illustrative and may not be
# available on your account; the call goes through the OpenAI Responses API.
from openai import OpenAI

client = OpenAI(api_key="YOUR_API_KEY")

stream = client.responses.create(
    model="gpt-5.3-codex-spark",
    instructions="You are a fast, precise coding assistant.",
    input=(
        "In file app.py, refactor this function to be async and add type hints:\n\n"
        "<paste code here>"
    ),
    max_output_tokens=256,  # a small cap keeps each iteration short
    stream=True,
)

for event in stream:
    if event.type == "response.output_text.delta":
        print(event.delta, end="", flush=True)  # print incremental text for instant UI
    elif event.type == "response.completed":
        print("\n[done]")
```

Why this pattern? Streaming plus a small output-token limit keeps iterations snappy in the editor. Use Spark when you want sub-second, incremental completions.

B) Example — agentic, long-running task with GPT-5.3-Codex (Python)

```python
# Illustrative sketch of a higher-effort request: find the failing module, write a fix, draft a PR.
# Assumptions: the model ID is illustrative, and tool wiring (shell, git) is omitted because
# it depends on your agent harness; without tools the model can only propose a patch.
from openai import OpenAI

client = OpenAI(api_key="YOUR_API_KEY")

response = client.responses.create(
    model="gpt-5.3-codex",
    instructions="You are an engineering agent. You can run tests and edit files given repo access.",
    input=(
        "Take the repository at /workspace/myapp, run the test suite, and if any tests fail, "
        "create a minimal fix and return a patch plus a test that demonstrates the bug."
    ),
    max_output_tokens=2000,  # counts reasoning tokens too; raise it if output is truncated
    reasoning={"effort": "xhigh"},  # effort settings: low/medium/high/xhigh
)

# The response may include a multi-step plan, diffs, and tests.
print(response.output_text)
```

Why this pattern? Codex’s reasoning modes (low→xhigh) let you trade latency for careful multi-stage planning; it’s designed for higher-risk, longer-horizon tasks where you want the model to orchestrate tools and preserve state across steps.

Conclusion: which model “wins”?

There is no single winner — each model targets complementary parts of the software engineering lifecycle. GPT-5.3-Codex is the better choice when correctness, long-horizon reasoning, and tool orchestration matter. GPT-5.3-Codex-Spark wins where preserving developer flow and minimizing latency are paramount. For most organizations, the correct strategy is not an either/or decision but an integrated one: use Codex as the architect and Spark as the mason. Early adopters already report productivity gains when both models are wired into the toolchain with robust verification.
